Movies123 Alternatives: 20 Best Sites to Watch Movies Free

Movies123 is the ultimate destination for those seeking to watch free movies online. With the official website of 123movies, you can access a vast...

LiveTV is a free website where you can watch sports and games from all over the world as they happen. It...

Looking for a way to enjoy unblocked gaming and entertainment online? Look no further than ClassWork.cc. This leading online resource offers a wide range of...

Best StreamEast Live Alternatives Free Sports Streaming Sites: StreamEast’s entertainment service broadcasts live sports events for free. There are...

SkinPort is the ultimate marketplace for CS:GO (Counter-Strike: Global Offensive) skins, offering gamers a secure and user-friendly platform to buy and sell...

Are you tired of dealing with cluttered and disorganized files on your Android device? Look no further! Introducing Cx File Explorer, the versatile file...

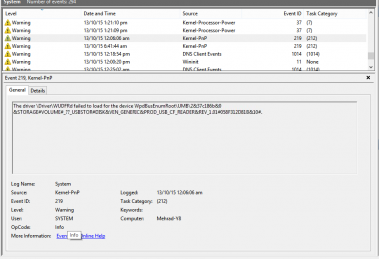

Microsoft will begin offering Windows 10 Update v1903 shortly, and I am confident that most of you are excited about installing it as soon...

This post explains the benefits of social media for business. Remember when people said social media was simply a trend? Its power has become...

This article describes the best reasons to call a locksmith service. Being locked out of your house or place of business can be very...

Welcome to AniWave, the ultimate portal for anime lovers. If you’re a fan of anime series and movies, you’ve come to the right place. AniWave...

Striven ERP is resource planning software that helps firms bring their remote workers together and streamline operations. Employees may work...